I typically run compute jobs remotely, using my M1 MacBook as a terminal. So when PyTorch recently launched its Metal backend for M1 chips, I was curious to see how much GPU acceleration it could deliver. To make the setup easy, Anaconda also recently released an M1-native version. However, I had to do a fresh install of Anaconda to get it to work (updating conda from within conda did not work).

To install Anaconda with Apple-native Python: https://www.anaconda.com/blog/new-release-anaconda-distribution-now-supporting-m1

To install PyTorch with Metal GPU acceleration: https://pytorch.org/blog/introducing-accelerated-pytorch-training-on-mac/

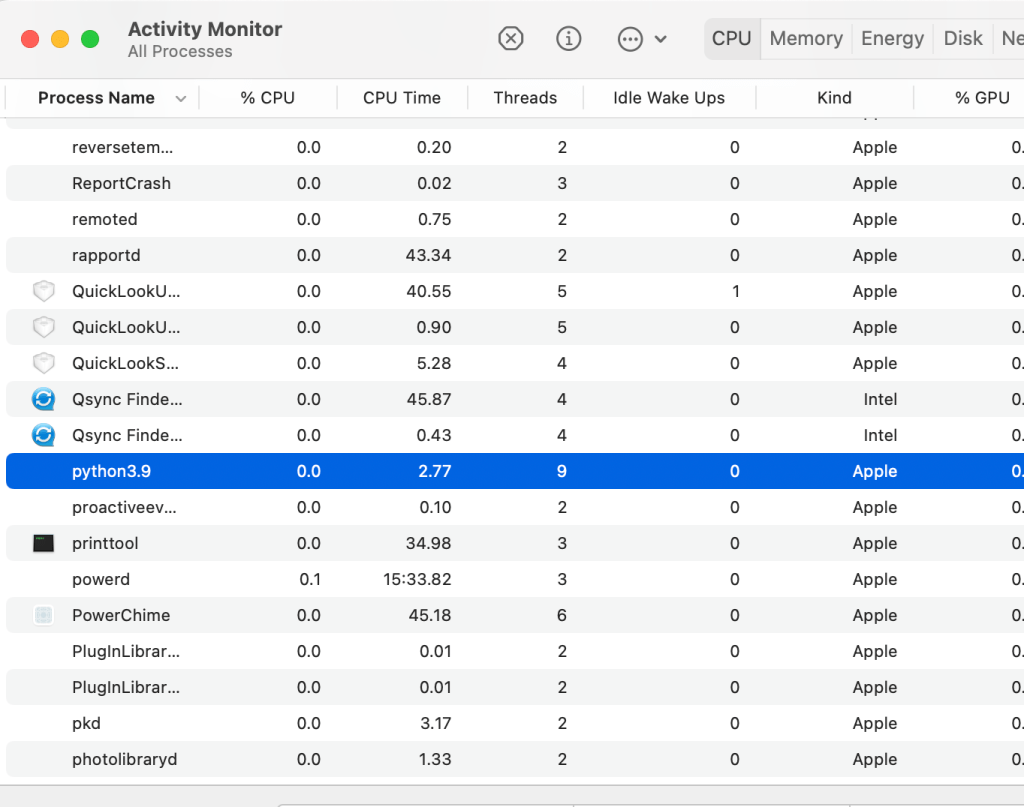

If everything is installed properly, you should see Python 3.9 listed as an Apple process (rather than Intel) in Activity Monitor:

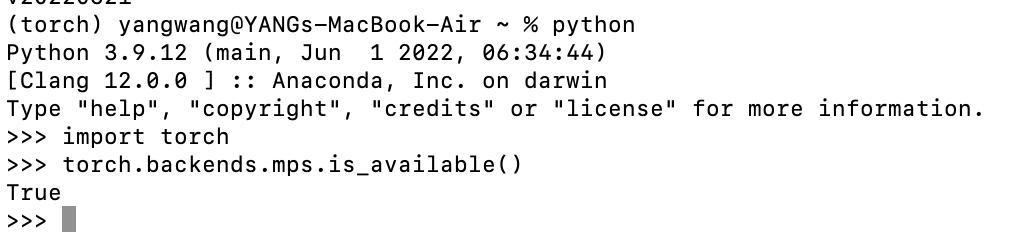
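The same check can be done from inside Python. This is a minimal sketch using only the standard library; the helper name `describe_interpreter` is made up for illustration. On an M1-native install, `platform.machine()` reports `arm64`, while an Intel build running under Rosetta reports `x86_64`.

```python
import platform
import sys


def describe_interpreter() -> dict:
    """Collect basic facts about the running Python interpreter."""
    return {
        "machine": platform.machine(),        # CPU architecture the process runs as
        "system": platform.system(),          # "Darwin" on macOS
        "python": platform.python_version(),  # e.g. a 3.9.x version string
        "executable": sys.executable,         # path of the interpreter in use
    }


if __name__ == "__main__":
    info = describe_interpreter()
    for key, value in info.items():
        print(f"{key}: {value}")
    # On a Mac, anything other than arm64 means a non-native build.
    if info["system"] == "Darwin" and info["machine"] != "arm64":
        print("This Python is not running natively on Apple silicon.")
```

The `executable` path is handy for confirming that the interpreter really comes from the fresh Anaconda install and not from an older one still on your `PATH`.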

You should also see from PyTorch that the ‘MPS’ backend is available:

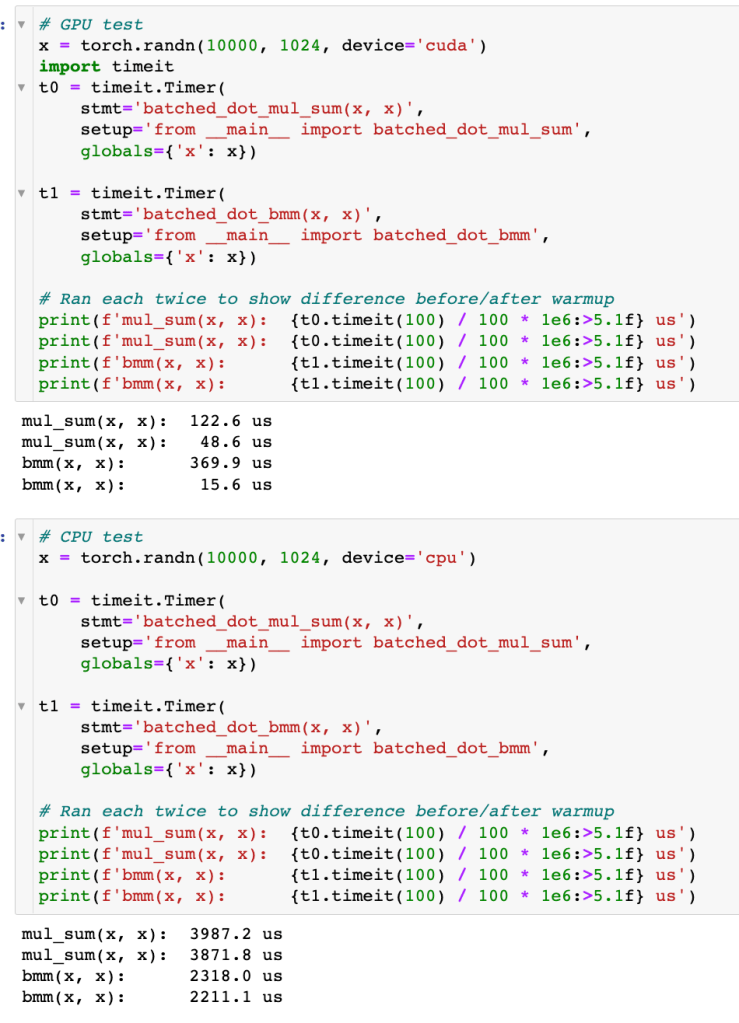
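In a script, that availability check usually turns into a device selection with a CPU fallback. A rough sketch, assuming PyTorch 1.12 or later (`pick_device` is a hypothetical helper, not part of PyTorch):

```python
import torch


def pick_device() -> torch.device:
    """Return the MPS device when usable, otherwise fall back to the CPU."""
    # torch.backends.mps is missing in PyTorch builds older than 1.12.
    mps = getattr(torch.backends, "mps", None)
    if mps is not None and mps.is_available():
        return torch.device("mps")
    return torch.device("cpu")


if __name__ == "__main__":
    device = pick_device()
    x = torch.ones(3, device=device)  # tensor allocated on the chosen device
    print(device, x.sum().item())
```

If `is_available()` returns `False` on an M1 machine, `torch.backends.mps.is_built()` tells you whether the installed PyTorch build was compiled with MPS support at all.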

I then ran a simple dot product speed test on this setup, using MPS vs. CPU as the compute device. After warm-up, the GPU-accelerated batch multiplication is ~14x faster than the same batched computation on the M1 CPU. Compared to the un-batched dot product on the CPU, however, the GPU-accelerated batch dot product is only 4.3x faster. There’s certainly a performance boost, but nothing to write home about.
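For reference, here is a sketch of what such a comparison might look like. It is not the exact test I ran: the batched matrix multiply via `torch.bmm`, the tensor sizes, and the helper names (`sync`, `time_it`, `run_benchmark`) are all assumptions for illustration, and `torch.mps.synchronize()` assumes PyTorch 2.0 or later.

```python
import time

import torch


def sync(device: torch.device) -> None:
    """Wait for queued GPU work so the timing covers the actual compute."""
    if device.type == "mps":
        torch.mps.synchronize()  # assumes PyTorch 2.0 or later
    elif device.type == "cuda":
        torch.cuda.synchronize()


def time_it(fn, device: torch.device, warmup: int = 3, repeats: int = 10) -> float:
    """Return the mean seconds per call of fn, measured after a warm-up."""
    for _ in range(warmup):
        fn()
    sync(device)
    start = time.perf_counter()
    for _ in range(repeats):
        fn()
    sync(device)
    return (time.perf_counter() - start) / repeats


def run_benchmark(batch: int = 64, n: int = 256, repeats: int = 10) -> dict:
    """Time batched vs. un-batched products on CPU, and batched on MPS."""
    cpu = torch.device("cpu")
    a_cpu = torch.randn(batch, n, n)
    b_cpu = torch.randn(batch, n, n)
    results = {
        "cpu_batched": time_it(
            lambda: torch.bmm(a_cpu, b_cpu), cpu, repeats=repeats),
        "cpu_unbatched": time_it(
            lambda: [torch.mm(x, y) for x, y in zip(a_cpu, b_cpu)],
            cpu, repeats=repeats),
    }
    if torch.backends.mps.is_available():
        mps = torch.device("mps")
        a_mps, b_mps = a_cpu.to(mps), b_cpu.to(mps)
        results["mps_batched"] = time_it(
            lambda: torch.bmm(a_mps, b_mps), mps, repeats=repeats)
    return results


if __name__ == "__main__":
    for name, seconds in run_benchmark().items():
        print(f"{name}: {seconds * 1e3:.3f} ms per call")
```

The explicit synchronization matters because GPU work is queued asynchronously; without it, the timer can stop before the computation has finished. The speedups you get will depend heavily on the batch and matrix sizes you pick.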

Running the same comparison on my home workstation (RTX 6000 GPU and a 10-core Xeon W-2155 CPU), I get a very different picture: the GPU-accelerated batch dot product is more than 140x faster than the CPU version!

So, the lesson is: don’t run deep learning tasks locally on your laptop (duh!). If you must, GPU acceleration will give you some tangible speedups. Also, the M1-native Python install should generally be faster than an Intel build running under Rosetta 2, and help conserve battery.